ADAS & Autonomous Vehicle Technology Expo has drawn to a close at San Jose McEnery Convention Center in California, and there was still plenty for visitors to see on Day 2, with products and innovations on show from the likes of ACE, Xylon, LeddarTech, AB Dynamics, NI, Trimble, Humanetics and Seagate…

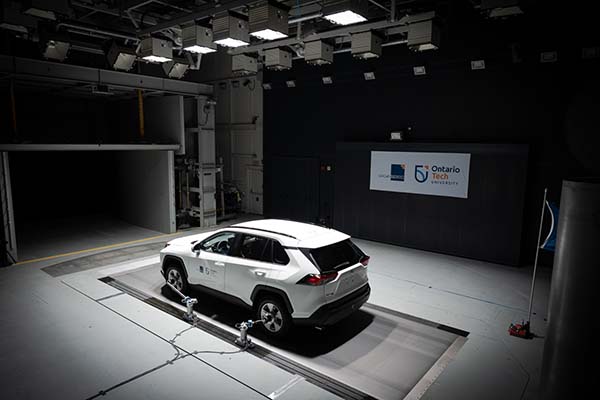

Moving ground plane enables full vehicle aerodynamic testing in a controlled environment

ACE – Ontario Tech University is showcasing its moving ground plane on Day 2 at ADAS & Autonomous Vehicle Technology Expo.

“The enhancement project features three main areas that have been upgraded to support the installation of the moving ground plane (MGP),” explained marketing manager Andrew Karski, live at the expo. “The project involves enhancements to the wind tunnel’s airflow quality and acoustics; the addition of advanced aerodynamic force measurement devices (MGP, drag links and wheel hubs, force measurement systems); and some building modifications and enhancements.”

He continued, “The moving ground plane enables the airflow under the vehicle to replicate the aerodynamic flow of a vehicle on the road as closely as possible. In this way, full-vehicle aerodynamic testing in a controlled environment offers more value to OEMs.”

The moving ground plane boasts impressive specifications: it measures 1178-1696mm in width, has a maximum speed of 210km/h, offers belt force measurement of up to 8000N per wheel and weighs 150,000lbs (68,039kg). It will give both the motorsport industry and OEMs the tools to conduct research in a high-tech environment, helping companies and researchers develop new products such as active aero, maximize energy efficiency and reduce carbon emissions.
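To put the quoted figures in context, the short Python sketch below converts the MGP's mass and top belt speed to SI units and applies the standard drag equation, F = ½ρv²C_dA, at 210km/h. The air density, drag coefficient and frontal area are illustrative assumptions for a generic passenger car, not ACE data, and the function names are hypothetical.

```python
# Sketch: convert quoted MGP specs to SI units and estimate drag at top speed.
# AIR_DENSITY, Cd and frontal area are illustrative assumptions, not ACE data.

LB_TO_KG = 0.45359237   # exact definition of the international avoirdupois pound
AIR_DENSITY = 1.225     # kg/m^3, ISA sea-level standard atmosphere (assumed)


def lb_to_kg(lb: float) -> float:
    """Convert pounds to kilograms."""
    return lb * LB_TO_KG


def kmh_to_ms(kmh: float) -> float:
    """Convert km/h to m/s."""
    return kmh / 3.6


def drag_force(speed_kmh: float, cd: float, frontal_area_m2: float,
               rho: float = AIR_DENSITY) -> float:
    """Drag equation F = 0.5 * rho * v^2 * Cd * A, returned in newtons."""
    v = kmh_to_ms(speed_kmh)
    return 0.5 * rho * v ** 2 * cd * frontal_area_m2


if __name__ == "__main__":
    print(f"MGP mass: {lb_to_kg(150_000):,.0f} kg")                   # 68,039 kg
    print(f"Max belt speed: {kmh_to_ms(210):.1f} m/s")                  # 58.3 m/s
    # Hypothetical passenger car: Cd = 0.30, frontal area = 2.2 m^2
    print(f"Drag at 210 km/h: {drag_force(210, 0.30, 2.2):,.0f} N")    # 1,376 N
```

With these assumed values the total drag comes out at roughly 1.4kN, a useful order of magnitude when reading the per-wheel and drag-link force figures.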

“Many of the autonomous vehicle and ADAS companies in California have been conducting testing at ACE for years,” said Karski. “ADAS & Autonomous Vehicle Technology Expo is an opportunity to meet with our current clients and develop new relationships in person where these companies are located and to present our newest test capability. In addition, ACE has participated in Automotive Testing Expo in Novi, MI for a long time and we knew from that experience that this expo would have value for ACE and our customers.”

HIL live demonstration: when a physical ECU meets a software simulator

Electronics specialist Xylon is giving a live demonstration of its complete HIL demo system, which converts sensor data generated by a software simulator into real automotive stimuli for an attached parking-assistance ADAS ECU. To develop the demo and explore different methods of connecting hardware ECUs to simulation software, Xylon has teamed up with rFpro, a driving simulation software specialist and fellow exhibitor at the expo.

According to technical marketing director Gordan Galic, the demo setup consists of a PC running rFpro’s advanced simulator, Xylon’s logiRECORDER Automotive HIL Video Logger in Smart I/O mode, and Xylon’s Surround View ECU.

“rFpro simulates the car model, its surroundings and four 1920×1080-pixel, 30fps video cameras,” explained Galic. “Camera image generators convert native RGB888 pixels into the YUV420 pixels common to real sensors and add the fish-eye lens distortion typical of the optics used in this ADAS application. Xylon’s data transfer plug-in connects to rFpro’s interface for externals, originally developed for soft ECU models, and hands the simulated camera videos over to the streaming application. The video data is formatted into the standard MIPI packets that real-world sensors typically generate, and is timestamped before being transmitted to the logiRECORDER via a 10GigE Ethernet link.”
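The two image-generator steps Galic describes can be sketched in plain Python. The example assumes full-range BT.601 coefficients for the RGB-to-YUV conversion and an equidistant fisheye model (r = f·θ); the source specifies neither, rFpro's actual generators may use different matrices and lens models, and a production generator would process whole frames far more efficiently. All function names are hypothetical.

```python
import math
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), 8 bits per channel = RGB888


def _clamp(x: float) -> int:
    """Round and clamp to the 0-255 range of an 8-bit sample."""
    return max(0, min(255, int(round(x))))


def rgb_to_yuv(r: int, g: int, b: int) -> Tuple[int, int, int]:
    """Full-range BT.601 RGB -> YUV (assumed; the demo's matrix is not stated)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    u = -0.168736 * r - 0.331264 * g + 0.5 * b + 128
    v = 0.5 * r - 0.418688 * g - 0.081312 * b + 128
    return _clamp(y), _clamp(u), _clamp(v)


def rgb888_to_yuv420p(img: List[List[Pixel]]):
    """Return planar (Y, U, V). Y is full resolution; U and V are averaged
    over 2x2 blocks (4:2:0 subsampling). Assumes even width and height."""
    h, w = len(img), len(img[0])
    yuv = [[rgb_to_yuv(*img[row][col]) for col in range(w)] for row in range(h)]
    y_plane = [[p[0] for p in row] for row in yuv]
    u_plane, v_plane = [], []
    for row in range(0, h, 2):
        u_row, v_row = [], []
        for col in range(0, w, 2):
            block = [yuv[row + dy][col + dx] for dy in (0, 1) for dx in (0, 1)]
            u_row.append(_clamp(sum(p[1] for p in block) / 4))
            v_row.append(_clamp(sum(p[2] for p in block) / 4))
        u_plane.append(u_row)
        v_plane.append(v_row)
    return y_plane, u_plane, v_plane


def pinhole_to_fisheye(x: float, y: float, f: float) -> Tuple[float, float]:
    """Map an undistorted (pinhole) point, relative to the optical centre,
    onto an equidistant fisheye image, r_fish = f * theta (assumed model)."""
    r_pinhole = math.hypot(x, y)
    if r_pinhole == 0:
        return 0.0, 0.0
    theta = math.atan2(r_pinhole, f)       # angle from the optical axis
    scale = (f * theta) / r_pinhole        # compresses the image periphery
    return x * scale, y * scale
```

The fisheye mapping pulls off-axis points towards the centre (a point at 45° lands at f·π/4 instead of f), which is the barrel-like distortion a surround-view ECU expects from wide-angle cameras.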

Galic continued, “The logiRECORDER de-encapsulates the Ethernet packets and, based on the encoded timestamps, outputs the synthetic videos on four native automotive GMSL2 interfaces. The ECU receives raw sensor data through four video inputs normally used for GMSL2 camera connections; fully unaware of the synthetic nature of its inputs, it generates a surround view video of the simulated scene.
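The timestamped transport Galic describes can be illustrated with a toy packet format. The sketch below splits each frame into chunks behind a hypothetical 17-byte header (camera ID, frame ID, nanosecond timestamp, chunk index, chunk count), then reassembles complete frames on the receiving side and releases them in timestamp order. The header is an assumption for illustration only; it is not the MIPI CSI-2 packet layout or Xylon's actual encapsulation.

```python
import struct
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical header, network byte order, no padding (17 bytes):
# camera id (u8), frame id (u32), timestamp ns (u64), chunk idx (u16), chunk count (u16).
# NOT the real MIPI or Xylon packet format.
HEADER = struct.Struct("!BIQHH")


def packetize(camera_id: int, frame_id: int, ts_ns: int,
              payload: bytes, max_chunk: int = 8000) -> List[bytes]:
    """Split one video frame into header-prefixed chunks (sender side)."""
    chunks = [payload[i:i + max_chunk]
              for i in range(0, len(payload), max_chunk)] or [b""]
    return [HEADER.pack(camera_id, frame_id, ts_ns, idx, len(chunks)) + chunk
            for idx, chunk in enumerate(chunks)]


def reassemble(packets: List[bytes]) -> List[Tuple[int, int, bytes]]:
    """Rebuild complete frames from packets arriving in any order and return
    (timestamp_ns, camera_id, frame_bytes) sorted by timestamp.
    Frames with missing chunks are dropped."""
    parts: Dict[Tuple[int, int], Dict[int, bytes]] = defaultdict(dict)
    meta: Dict[Tuple[int, int], Tuple[int, int]] = {}
    for pkt in packets:
        cam, frame, ts, idx, count = HEADER.unpack_from(pkt)
        parts[(cam, frame)][idx] = pkt[HEADER.size:]
        meta[(cam, frame)] = (ts, count)
    frames = []
    for (cam, frame), chunks in parts.items():
        ts, count = meta[(cam, frame)]
        if all(i in chunks for i in range(count)):
            frames.append((ts, cam, b"".join(chunks[i] for i in range(count))))
    return sorted(frames)  # release order follows the encoded timestamps
```

Sorting on the timestamp, rather than on arrival order, is what lets a receiver present the four synthetic camera streams to the ECU with consistent timing even if packets arrive out of order.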

“The ECU displays the complete 360° surroundings of the car by stitching together the video inputs from the simulated cameras placed on the car model’s front grille, at the rear and within the side-view mirrors. The car model and its surroundings can be viewed from different perspectives using a mouse-controlled virtual flying camera.”

The demo also integrates a loop-back feature. The ECU’s responses are fed back to the logiRECORDER over the automotive CAN interface, and the logiRECORDER forwards them to the simulator via Ethernet. This turns the complete demo setup into a fully featured virtual test drive simulator, since the simulator can adapt its scenarios based on the ECU’s responses.
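A loop-back path of this kind can be sketched as a simulator step that decodes a CAN frame from the ECU and adjusts the simulated vehicle accordingly. The CAN ID 0x123 and the brake-request signal (a 16-bit deceleration in 0.01m/s² units) are hypothetical, chosen only to show the pattern; the messages the demo's Surround View ECU actually sends are not described in the source.

```python
import struct
from dataclasses import dataclass

# Hypothetical ECU message: CAN ID 0x123, bytes 0-1 = requested deceleration
# in 0.01 m/s^2 units (big-endian, unsigned). Not taken from the Xylon/rFpro demo.
BRAKE_REQUEST_ID = 0x123


@dataclass
class CanFrame:
    can_id: int
    data: bytes


@dataclass
class SimState:
    speed_ms: float  # simulated vehicle speed, m/s


def decode_brake_request(frame: CanFrame) -> float:
    """Return the requested deceleration in m/s^2, or 0.0 if the frame is not
    a (well-formed) brake request."""
    if frame.can_id != BRAKE_REQUEST_ID or len(frame.data) < 2:
        return 0.0
    (raw,) = struct.unpack_from(">H", frame.data)
    return raw * 0.01


def sim_step(state: SimState, ecu_frame: CanFrame, dt: float) -> SimState:
    """Advance the simulated car by dt seconds, adapting to the ECU's response
    (closed loop): the next rendered scene depends on what the ECU requested."""
    decel = decode_brake_request(ecu_frame)
    return SimState(speed_ms=max(0.0, state.speed_ms - decel * dt))
```

In a full loop, each `sim_step` result would drive the next batch of rendered camera frames, which is what distinguishes a closed-loop virtual test drive from open-loop replay.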

Rather than working in a special diagnostics mode, the ECU runs its production firmware. The raw input data is generated at a level of fidelity that enables full sensor fusion and perception testing, as well as testing of the full ECU controls. The Surround View ECU can be swapped for other types of ECU.

LeddarTech showcases raw-data sensor fusion and perception for ADAS and AD systems

On Day 2, LeddarTech is demonstrating its raw-data sensor fusion and perception solution, LeddarVision.

“Sensor fusion is the merging of data from at least two sensors,” explained company representatives at the expo. “In autonomous vehicles, perception refers to the processing and interpretation of sensor data to detect, identify, classify and track objects. Sensor fusion and perception enable an autonomous vehicle to develop a 3D model of the surrounding environment that feeds into the vehicle’s control unit.”

While sensor fusion (the fusion of data from different sensors) and perception (the online collection of information about the surrounding environment) are already in use in current ADAS and AD applications, LeddarTech says the technology still has one major drawback: each detection is based on incomplete information from an individual sensor (camera, radar, lidar, etc), resulting in partial or even contradictory information that can lead the system to make a wrong decision.

Currently, the most common type of fusion employed by software providers is object-level fusion, in which perception is performed separately on each sensor’s data. For LeddarTech, this is not optimal: when the sensor data is not fused before the system plans, the inputs may contradict one another. For example, if an obstacle is detected by the camera but not by the lidar or the radar, the system may be unsure whether the vehicle should stop.

Instead, LeddarTech employs a raw-data fusion approach: the raw data from the different sensors is first fused into a dense and precise 3D RGBD environmental model, and decisions are then made based on this single model built from all the available information. Fusing raw data from multiple frames and multiple measurements of a single object improves the signal-to-noise ratio (SNR), enables the system to overcome single-sensor faults and allows the use of lower-cost sensors. According to the company, this provides better detections and fewer false alarms, especially for small obstacles and unclassified objects.
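The core of raw-data (early) fusion, attaching lidar depth to camera pixels before any object detection takes place, can be sketched as below, along with a quick simulation of the SNR claim: for independent noise, averaging N measurements reduces the noise standard deviation by a factor of √N. This is a generic illustration, not LeddarVision's implementation; it assumes a pinhole camera and lidar points already transformed into the camera frame, and the noise figures and function names are made up for the example.

```python
import random
from statistics import mean, pstdev
from typing import Dict, List, Sequence, Tuple

Point3 = Tuple[float, float, float]
RGB = Tuple[int, int, int]


def project_to_depth(points_cam: Sequence[Point3], fx: float, fy: float,
                     cx: float, cy: float, width: int,
                     height: int) -> Dict[Tuple[int, int], float]:
    """Project lidar points (already in the camera frame: x right, y down,
    z forward, metres) through a pinhole model, keeping the nearest depth
    per pixel."""
    depth: Dict[Tuple[int, int], float] = {}
    for x, y, z in points_cam:
        if z <= 0:
            continue  # point is behind the camera
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            if (u, v) not in depth or z < depth[(u, v)]:
                depth[(u, v)] = z
    return depth


def fuse_rgbd(rgb: List[List[RGB]], depth: Dict[Tuple[int, int], float]
              ) -> Dict[Tuple[int, int], Tuple[int, int, int, float]]:
    """Attach depth to camera pixels: (u, v) -> (r, g, b, d), a sparse RGBD image."""
    return {(u, v): (*rgb[v][u], d) for (u, v), d in depth.items()}


def averaged_error_std(n_frames: int, trials: int = 2000, true_m: float = 20.0,
                       sigma_m: float = 0.5, seed: int = 0) -> float:
    """Monte Carlo estimate of the std of a range estimate averaged over
    n_frames noisy measurements (independent Gaussian noise assumed)."""
    rng = random.Random(seed)
    estimates = [mean(true_m + rng.gauss(0.0, sigma_m) for _ in range(n_frames))
                 for _ in range(trials)]
    return pstdev(estimates)
```

With the defaults, the ratio of `averaged_error_std(1)` to `averaged_error_std(16)` comes out close to √16 = 4. The √N gain only holds when the noise in the individual measurements is uncorrelated.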

New next-gen ADAS targets for developing and testing active safety systems

AB Dynamics, together with sister company Dynamic Research Inc (DRI), is previewing two new next-generation ADAS targets at this week’s ADAS & Autonomous Vehicle Technology Expo in San Jose, California.

The first is the Soft Motorcycle 360 powered two-wheeler (PTW) target while the second is the Soft Pedestrian 360. The targets will be used for developing and testing active safety systems by vehicle manufacturers, test houses and regulatory bodies.

The Soft Motorcycle 360 complies with Euro NCAP 2023 and ISO 19206-5 draft requirements. In conjunction with AB Dynamics’ LaunchPad 80 platform, the target is capable of an 80km/h top speed, which means it can be utilized for all upcoming 2023 Euro NCAP test scenarios involving motorcycles.

The Soft Pedestrian 360 is ISO 19206-2 compliant and brings a new level of realism to the vulnerable road user (VRU) testing market. This is achieved through sophisticated articulation of the upper and lower body, including the hip, knee, shoulder and neck, for more realistic motion.

Using the LaunchPad VRU platforms and path following software, the targets are precisely controlled and choreographed with a test vehicle to within an accuracy of 2cm. This enables pre-programmed test scenarios, such as prescriptive Euro NCAP tests, to be conducted quickly and repeatably.
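In its simplest form, choreographing a target with a test vehicle comes down to timing: the target must start so that it reaches the conflict point at the same moment as the vehicle. The sketch below assumes constant speeds and straight paths (real platforms such as LaunchPad use closed-loop path following, which this ignores), and the distances and speeds in the usage note are hypothetical. It also shows what a 2cm position tolerance means in time at a given vehicle speed.

```python
def kmh_to_ms(kmh: float) -> float:
    """Convert km/h to m/s."""
    return kmh / 3.6


def target_start_delay(vehicle_dist_m: float, vehicle_speed_kmh: float,
                       target_dist_m: float, target_speed_kmh: float) -> float:
    """Seconds after the trigger at which a constant-speed target must start
    so both arrive at the conflict point together (negative: start earlier)."""
    t_vehicle = vehicle_dist_m / kmh_to_ms(vehicle_speed_kmh)
    t_target = target_dist_m / kmh_to_ms(target_speed_kmh)
    return t_vehicle - t_target


def timing_budget_s(position_tolerance_m: float, speed_kmh: float) -> float:
    """Time window equivalent to a position tolerance at a given speed."""
    return position_tolerance_m / kmh_to_ms(speed_kmh)
```

For a vehicle 60m from the conflict point at 40km/h and a pedestrian target 6m away at 5km/h, `target_start_delay(60, 40, 6, 5)` is about 1.08s, while `timing_budget_s(0.02, 40)` is 1.8ms, which illustrates why 2cm-level synchronization needs continuous position feedback rather than a single start trigger.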

The Soft Motorcycle 360 will be officially launched later this month on September 14, 2022.

The Soft Motorcycle 360 and Soft Pedestrian 360 have been designed and engineered by AB Dynamics Group company DRI in California. The company provides research and testing services for the world’s leading OEMs, completing hundreds of tests per year, including FMVSS, NHTSA NCAP and Euro NCAP tests. DRI has leveraged its extensive hands-on experience with test targets and equipment to design, develop and produce a range of best-in-class surrogate targets, such as the Soft Car 360.

ADAS recording-equipped vehicle, and ADAS replay and HIL validation

NI is on-site at ADAS & Autonomous Vehicle Technology Expo in San Jose to showcase its latest innovations for virtual validation, including its ADAS recording-equipped vehicle and its ADAS replay and HIL validation.

By combining its strengths with those of trusted third-party experts, NI works with customers to build a connected ADAS and automated driving (AD) validation workflow to get vehicles to market faster. The company recently launched a fleet of ADAS vehicles equipped with the high-performance in-vehicle data recording and storage solution from NI and Seagate Technology, with integration services from ADAS experts Konrad Technologies and VSI Labs. The collaboration enables a connected workflow by combining best-in-class technologies across the global ecosystem.

An ADAS recording-equipped vehicle will be on display in San Jose to show attendees how these industry leaders are working together to address the data challenges facing ADAS and autonomous driving engineering teams.

NI is also demonstrating its new ADAS replay and HIL solution, which aggregates recorded or synthetic data to validate perception software on ADAS ECUs.

Built on NI’s open system architecture, the solution creates a seamlessly connected ADAS and AD workflow, from data recording to replay and hardware in the loop (HIL). This single toolchain across the product development workflow incorporates open, modular hardware and test automation software, and connects to AV simulation environments to increase productivity and reuse.

Brenda Vargas, senior solutions marketing manager, automotive (transportation: validation) at NI, revealed live on Day 2, “It is really important for us to show at an ADAS-focused event, talk to OEMs and follow trends, as the AV industry is changing so fast, with lots of startups. The Bay Area is a good gathering place for these companies. The NI system is a customized, open platform and its ecosystem helps them go to market faster. It provides a complete solution, bringing in best-in-class partners and helping to break down the silos in their workflow.”

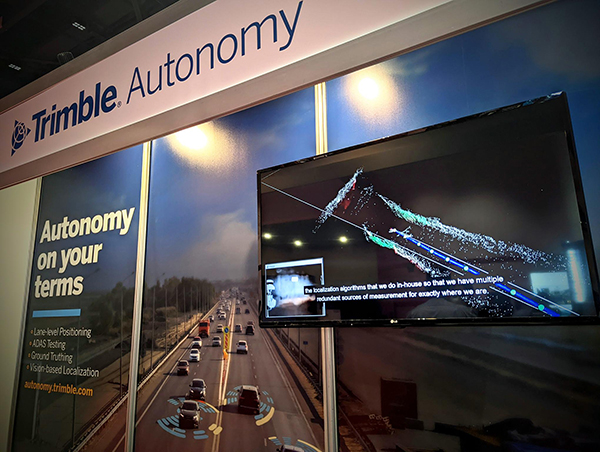

High-accuracy global precise point positioning (PPP) and orientation in nearly any environment

At the inaugural expo in San Jose, Trimble is showcasing its proprietary RTX technology that can deliver 2cm accuracy for certain applications, with better than 10cm performance for lane-level positioning on the road.

According to Marc Davis, marketing communications at Trimble, high-accuracy positioning and orientation are crucial to any safety-critical application. If onboard relative sensors such as radar or cameras go offline or are impeded by inclement weather conditions such as a driving snowstorm, absolute precise positioning still contributes data to the vehicle to help maintain lane-level positioning.

To date, the technology has enabled well over 30 million miles (48 million kilometers) of incident-free autonomous driving for OEMs.

Trimble’s autonomous solutions are customized to meet the unique requirements of each of its OEM and Tier 1 and 2 customers. The solutions consist of multiple positioning and orientation technologies that work in concert to deliver highly accurate, reliable positioning in nearly any road environment: augmented GNSS, inertial positioning and DMI can all be integrated to meet the dynamic challenges of varying driving environments around the world.
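Combining absolute and relative sources so that each can cover for the other is commonly done with a Kalman filter. The one-dimensional sketch below is a generic textbook illustration, not Trimble's algorithm: it dead-reckons along-track position from odometry or inertial increments, lets the uncertainty grow while no GNSS fix is available, and pulls it back down when an absolute fix arrives. All variances are assumed values, and a real GNSS/inertial system estimates full 3D position, attitude and sensor biases.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Estimate:
    pos: float  # along-track position, m
    var: float  # variance of the position estimate, m^2


def predict(est: Estimate, dist_travelled_m: float, odo_var: float) -> Estimate:
    """Dead-reckoning step using a relative distance increment (e.g. wheel
    odometry or integrated inertial data); uncertainty always grows."""
    return Estimate(est.pos + dist_travelled_m, est.var + odo_var)


def update(est: Estimate, gnss_pos_m: Optional[float], gnss_var: float) -> Estimate:
    """Correct with an absolute GNSS fix; if no fix is available (outage),
    keep the dead-reckoned estimate unchanged."""
    if gnss_pos_m is None:
        return est
    k = est.var / (est.var + gnss_var)  # Kalman gain: trust the less uncertain source
    return Estimate(est.pos + k * (gnss_pos_m - est.pos), (1.0 - k) * est.var)
```

After a GNSS outage the variance has grown, so the gain is close to 1 and the filter leans heavily on the returning absolute fix; with a healthy fix it mainly smooths the odometry.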

Trimble exhibited at the ADAS & Autonomous Vehicle Technology Expo in Stuttgart earlier this year, which Marc Davis says proved to be a good venue for the company to engage with OEMs and their extended technology ecosystem.

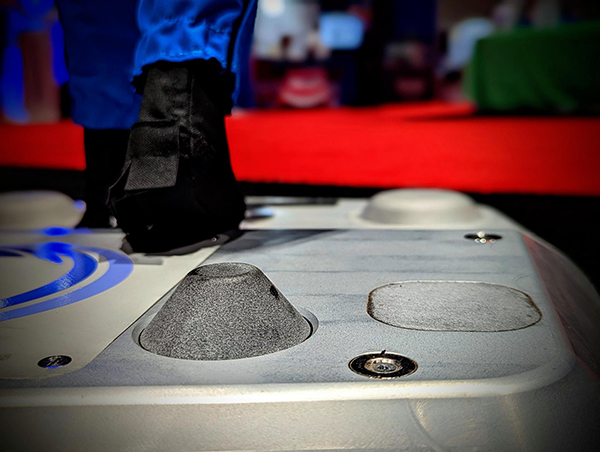

World’s smallest platform robot for VRU tests receives full Euro NCAP approval

On Day 2 of ADAS & Autonomous Vehicle Technology Expo, Humanetics is showcasing its comprehensive portfolio of active safety test equipment, including its ultra-flat overrunnable (UFO) platform robots, soft target vehicles and driving robots.

According to Kelli Baird, marketing and communications at Humanetics, “The company also supplies services globally, allowing you to take your testing pretty much anywhere.” Its remotely operated, GPS-enabled UFOs allow vehicle manufacturers to test the latest advanced crash avoidance systems in real-world scenarios. “The products are designed by practitioners and are built to be durable – crash after crash,” Baird said. “They are easy to transport to different locations and come fitted with removable batteries to allow for uninterrupted testing.”

“Our booth shows three of the four UFOs in our portfolio, including the UFONano, which just received full Euro NCAP approval,” Baird continued. “This means that it is now the world’s smallest platform robot for VRU tests listed in TB029, as well as the only one that can turn on the spot. This allows the small, handy, state-of-the-art nano robot to replace the previous rope-pulling systems, offering 2D maneuvers for realistic pedestrian behavior.”

The UFOs represent just one part of the complete active safety suite Humanetics offers, reflecting the company’s commitment to protecting people.

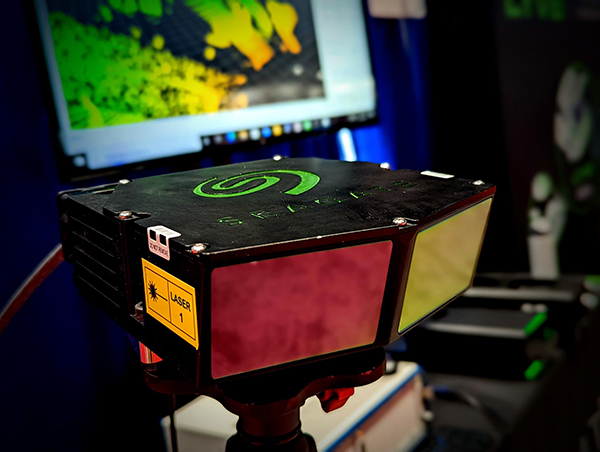

High-performance, reliable and low-cost lidar for next-gen ADAS and AVs

Renowned hard drive specialist, Seagate Technology, is in California to demonstrate its recently announced Gen 6 lidar, a high-precision, high-performance 120° FoV lidar designed for next-gen ADAS and autonomous vehicle applications.

David Burks, general manager at Seagate, revealed live at the expo, “Seagate has spent the last 15 years creating a new hard drive technology (heat-assisted magnetic recording), mounting tiny lasers to a hard drive read/write head to increase storage capacity on a hard drive.

“With our background in lasers and photonics, we have significant synergies with lidar technology for autonomous vehicles, and we have designed a lidar that uses many of the same suppliers as a hard drive. We can therefore develop a lidar focused on gaps in the market: a complex, high-volume mechanical device designed for manufacturability, cost and reliability.”

He continued, “If you can’t manufacture efficiently, then it is not cost-friendly. Our challenge was [to] design for reliability and cost, and to withstand the rigors of riding in a car, so that it has disruptive cost points.

“Lidar works in the dark like radar and under adverse weather conditions, and has better resolution at range, for example with small objects. Different types of lidar have different ranges. Solid-state lidars are very affordable, but the biggest challenge for solid-state lidar is range; it is difficult to achieve long range with solid-state lidar. It is a physics challenge.

“The Seagate mechanical lidar is going to fulfill the need for long-range lidar in the automotive sector,” Burks concluded.

Martin Booth, Seagate lidar business development executive, agreed, “Most OEMs need a 200m range and that’s increasing in the future; range depends on how fast you want to go and how far the braking distance is. Most OEMs want to drive at motorway speeds in Europe and avoid small objects on the road.”
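Booth’s point that the required range depends on speed and braking distance can be made concrete with the textbook stopping-distance formula, d = v·t_r + v²/(2a). The reaction time and deceleration below are assumptions, not OEM requirements, and 130km/h is used purely as an illustrative motorway speed. The sketch also computes the round-trip time of a pulsed time-of-flight lidar return; the source does not describe how Seagate’s lidar measures range.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s, exact by definition


def stopping_distance_m(speed_kmh: float, reaction_s: float,
                        decel_ms2: float) -> float:
    """Distance covered during the system reaction time plus braking distance
    at constant deceleration: d = v * t_r + v^2 / (2 * a)."""
    v = speed_kmh / 3.6
    return v * reaction_s + v ** 2 / (2.0 * decel_ms2)


def lidar_round_trip_s(range_m: float) -> float:
    """Round-trip time of a time-of-flight pulse to a target at range_m."""
    return 2.0 * range_m / SPEED_OF_LIGHT
```

With an assumed 1s system reaction time and 6m/s² of braking, `stopping_distance_m(130, 1.0, 6.0)` gives roughly 145m before any margin for detection and classification, and because braking distance grows with v², the required sensing range climbs quickly with speed. A return from 200m arrives after about 1.33µs.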

Seagate is also showcasing its Seagate Lyve Mobile storage arrays that, according to Burks, “allow mass amounts of storage in test cars to capture invaluable field data used in training ADAS decision systems.”

According to Booth, the company chose the ADAS & Autonomous Vehicle Technology Expo to showcase its products as “the show is focused on a hot topic, ADAS – and Silicon Valley is the right region to do this show. We’ve had a lot of good OEM meetings so far.”