Nitish Sanghi develops and deploys safety-critical perception and diagnostics for industrial autonomous vehicles. In this feature, he discusses the common issues that arise in autonomous vehicle systems, often found in real use cases, rather than in simulation or bench testing. These challenges highlight that while individual components may perform correctly in isolation, maintaining alignment and reliability across the full system under real-world conditions remains a critical hurdle

Why vehicle integration still surprises teams

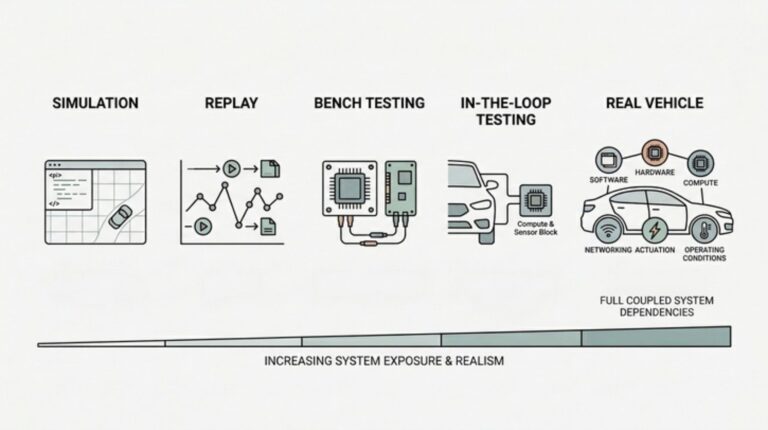

A stack can look stable in replay and still brake late the first week it runs on a real vehicle. By then, most AV programs have already been through bench, simulation, and in-the-loop work, with production code running on real processors and parts of the vehicle brought into the loop. Teams often have good reason to believe the system is ready. When the vehicle still surfaces a problem, the issue is usually not missing validation. The earlier stages exercised only part of the system that would eventually have to work as one on the road.

Bench tools are good at validating components and modeled behavior, but they do so in a simplified setting. Once the stack is on the vehicle, clock drift, bus traffic, vibration, thermal cycling, service history and platform-state changes stop arriving one by one. They show up together. Road surface, weather, and contamination can make those interactions less stable still, pushing the system toward boundaries that looked cleaner on paper. For many AV programs, the hard question is no longer whether an individual module can work in principle. It is whether the vehicle-level system can stay aligned under real operating conditions long enough to deploy.

When time and geometry start to drift

Time is often the first hidden dependency to surface, and timestamp jitter or synchronization drift is a common way it shows up. Perception, localization, prediction, and control may each be correct in isolation and still disagree about when the world state is true. Small offsets in sensor timestamps, clock domains or communication delays can degrade fusion, skew localization and leave the stack working from a world model that is already slightly out of date. The software may not fail outright. It may simply brake late or hand off unsteadily. These effects are easy to miss in staged testing because replay preserves one realized order of events and simulation usually runs with cleaner timing than the vehicle. On the vehicle, drift, buffering, bus traffic and load arrive together, and a small timing error stops looking small.

Calibration is the spatial version of the same problem. It is often treated as fixed after setup, even though vehicles spend their lives under vibration, thermal cycling, service events and small physical shifts. Those shifts may be minor and still matter at the distances and object sizes autonomy systems have to reason about. The dangerous case is usually not an obvious fault. More often, the outputs still look plausible while fusion, localization, prediction and control are all working from slightly wrong geometry. Intrinsic and extrinsic changes can both contribute, and the effect builds quietly until safety margin is gone.

When full load changes system behavior

Even when timing and calibration look nominal, the stack still has to hold up under full production load. Compute contention often stays hidden until the system is running on the vehicle as it would in service. Bench testing may show acceptable average performance, but vehicles do not live at the average. Dense traffic, sensor bursts, logging, networking, health monitoring and recovery paths can all compete at once for CPU time, GPU cycles, memory bandwidth and interconnect capacity. In many cases, the bottleneck is not raw compute alone. It is how quickly data can move through the system. Because autonomy modules depend on one another, a delay in one stage rarely stays local. It flows downstream into stale world models, missed control deadlines and lost safety margin.

When the vehicle and stack disagree

State-machine mismatches are often the most confusing failures in the stack. The autonomy stack thinks the vehicle is ready to execute a plan, while the platform is degraded, recovering from a fault, or no longer accepting commands after a timeout or reset. The reverse can happen too: the platform reports nominal status while autonomy software is still reasoning from stale assumptions about mode or actuator availability. These mismatches usually surface during enable, handover, fault recovery or re-entry after a reset. Nothing looks obviously broken, but commands get rejected, the vehicle stops abruptly, or a plan that makes sense in software is simply unavailable on the platform. At that point, the platform and the autonomy stack are no longer sharing the same state.

None of this makes simulation, replay or bench testing any less important. They remain the right tools for validating components, interfaces and modeled behavior. Their limit is that they stop short of the full coupled system that appears when software, hardware, vehicle dynamics and operating conditions meet on the road. That has practical implications. Real-vehicle integration cannot be left only to test teams at the end of a program. It has to influence architecture, instrumentation, fault handling and test design from the start. A real vehicle does not just validate the stack. It reveals the system that was actually built.

Nitish Sanghi is a senior software engineer in autonomy perception at Cyngn, where he develops and deploys safety-critical perception and diagnostics for industrial autonomous vehicles. Previously, he integrated and tested autonomy software for Cruise’s Origin platform and worked on fail-safe autonomy systems at Argo AI. Earlier in his career, he built robotics and automation systems at Intuitive Surgical and Cogenra Solar. He holds an MS in robotics from the University of Michigan.

Related news, Muhammad Nauman Nasir of Mercedes-Benz on building safe, AI-driven, human-centered autonomous systems