The following is an overview of autonomous navigation research and development activities at the Technology Innovation Hub on Autonomous Navigation (TiHAN) at IIT Hyderabad, which has been exploring the combination of lidar odometry with a mapping algorithm to create an HD map of the environment. The vehicle is localized on this map in real time, which supports navigation and path planning for self-driving vehicles. Efficient algorithms have been developed for obstacle avoidance during navigation. HD map-based navigation suits controlled environments and GPS-denied scenarios. The proposed HD map-based navigation algorithm has been implemented in real time on an AV, tested, and validated.

Introduction to autonomous vehicles (AVs):

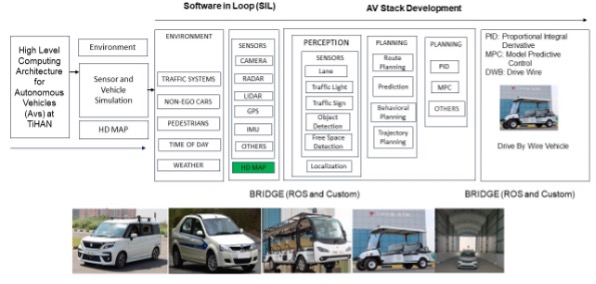

Autonomous vehicles, also known as self-driving cars, rely on a complex system of technologies to navigate and operate without human intervention. The building blocks of autonomous vehicles are shown in Fig. 1. Autonomous vehicles are equipped with various sensors to perceive the environment around them, including lidar, radar, cameras and ultrasonic sensors. The perception system processes data from these sensors to build a comprehensive understanding of the vehicle’s environment. This involves object detection, classification and tracking algorithms to identify and monitor obstacles, road conditions and traffic participants. Autonomous vehicles rely on highly detailed and up-to-date maps to understand their position and plan routes. Simultaneous Localization and Mapping (SLAM) techniques are used to build and update maps in real time while simultaneously determining the vehicle’s location within the map.

Autonomous vehicles use sophisticated control algorithms to operate safely and efficiently. These algorithms receive input from the perception system and make decisions on acceleration, braking and steering. AI and machine learning are crucial in self-driving cars: machine learning models are used to improve the perception system, predict the behavior of other road users, and optimize driving strategies. Autonomous vehicles must plan a safe and optimal path to their destination while considering factors such as traffic rules, road conditions and potential obstacles. Decision-making algorithms evaluate actions based on sensor inputs and select the most appropriate response.

Communication systems enable autonomous vehicles to exchange information with each other and with intelligent infrastructure (vehicle-to-everything, or V2X, communication). This connectivity enhances safety and efficiency on the road. Autonomous vehicles often incorporate redundant systems and safety features to minimize the risk of accidents. These include backup sensors, redundant computation units and fail-safe mechanisms. An intuitive interface is essential for interacting with passengers and pedestrians. It provides information about the vehicle’s status, upcoming maneuvers, and actions. Deploying autonomous vehicles requires appropriate regulations and legal guidelines to ensure safety and compliance with the law.

Building autonomous vehicles is a complex interdisciplinary effort that involves expertise in robotics, computer vision, artificial intelligence, control systems, and more. As technology advances, the capabilities and reliability of autonomous vehicles will continue to improve, eventually leading to their widespread adoption on public roads.

Map-based navigation is a critical component of autonomous vehicles (AVs), enabling them to plan routes, avoid obstacles and make informed driving decisions. AVs rely on sophisticated mapping and localization technologies to operate safely and efficiently. They use highly detailed, high-definition (HD) maps that go beyond traditional navigation maps. HD maps include precise information about road geometry, lane markings, traffic signs, traffic lights, curbs, and other critical elements. These maps are typically created using specialized surveying techniques, lidar and other sensor data to achieve centimeter-level accuracy. AVs use various sensor inputs, such as GPS, lidar, cameras and radar, to determine their precise position and orientation within the HD map. This process is known as localization; it allows the vehicle to know where it is on the map and to align its position accurately. Once the AV knows its position and destination, it employs path-planning algorithms to determine the best route. The path-planning system considers factors such as traffic conditions, road rules, speed limits, potential obstacles, and other dynamic elements to create a safe and efficient path.

AVs need to handle real-time updates to the map, such as road closures, construction sites, accidents and other unexpected changes. They receive this information through connected systems that provide live updates to the mapping database. AVs continuously analyze their surroundings using various sensors to detect and identify other vehicles, pedestrians, cyclists, and any potential obstacles on the road. This information is vital for the vehicle’s decision-making process and for ensuring safe navigation. Advanced AI and machine-learning algorithms are crucial in map-based navigation. These technologies help AVs improve their decision-making abilities, adapt to complex and unpredictable scenarios, and learn from their experiences to become safer and more efficient.

AVs incorporate redundancy in their navigation systems to ensure safety. If one sensor or system fails, backup systems take over and keep the vehicle under control. Fail-safe mechanisms are designed to handle emergencies and bring the AV to a safe stop if necessary. AVs can communicate with intelligent transportation systems and infrastructure elements, such as traffic lights and signs, to enhance navigation efficiency and safety. Vehicle-to-infrastructure (V2I) communication allows AVs to receive real-time traffic signal information and to adjust their speed so that they arrive at traffic lights at a favorable time.

Overall, map-based navigation is a core component of autonomous vehicles, enabling them to navigate complex urban environments and interact safely with other road users. Continuous advancements in mapping, localization, perception and AI technologies are driving the progress of autonomous vehicle navigation systems, making them increasingly capable and reliable for real-world deployment.

Real-time lidar odometry and mapping and creation of HD map:

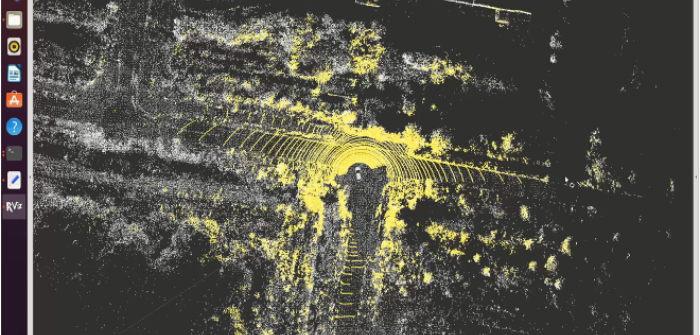

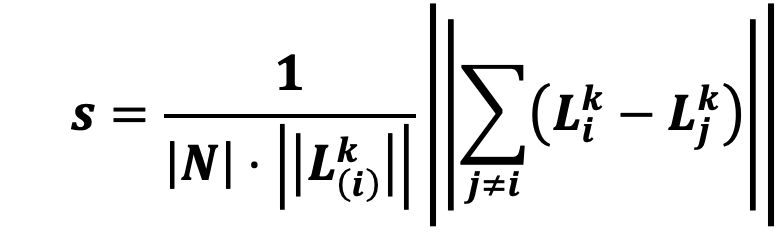

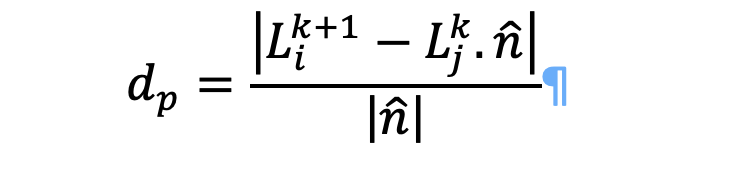

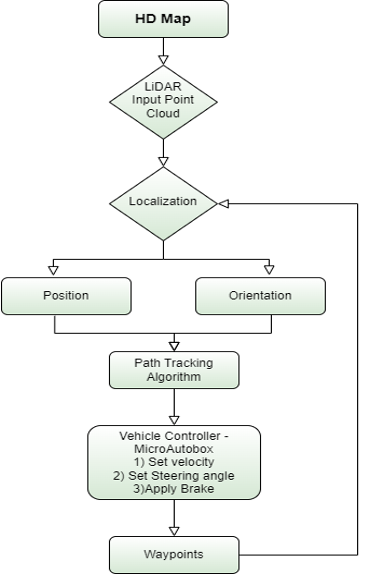

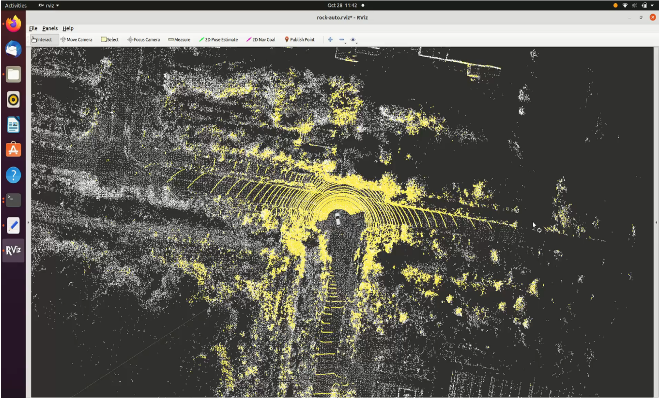

Simultaneous Localization and Mapping (SLAM) is a valuable technique for determining the location of a vehicle on a map in areas where GPS signals are unavailable or inaccurate. In such GPS-denied environments, lidar-based SLAM comes into play, using lidar sensors to perform mapping and localization. The point cloud data gathered by the lidar is used to construct a map (Fig. 2), and the constructed map enables autonomous vehicles to navigate effectively. Creating this map involves extracting feature points from the point cloud data. These feature points are categorized as either planar or edge points. To identify edge points within a sub-region of the image, the two points with the maximum smoothness value are selected. Conversely, to identify planar surface points, the three points with the minimum smoothness value are chosen. The smoothness value is determined using the equation below:

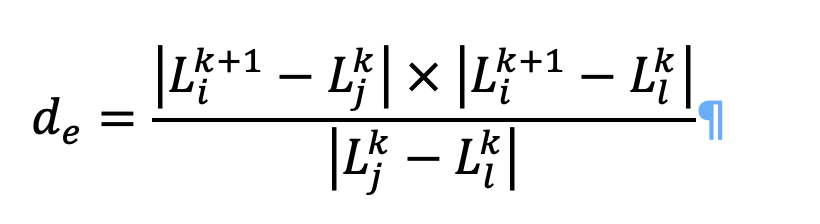
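The feature selection described above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in the study: the smoothness formula follows the commonly published LOAM definition, and the function names `smoothness` and `select_features`, the window size, and the synthetic corner scan are all assumptions made for the example.

```python
import numpy as np

def smoothness(scan_line, half_window=5):
    """LOAM-style smoothness c for each point of one lidar scan line.

    c_i = || sum_{j in S, j != i} (X_i - X_j) || / (|S| * ||X_i||),
    where S holds the neighbours of point i on the same scan line.
    Points too close to either end of the line are returned as NaN.
    """
    scan_line = np.asarray(scan_line, dtype=float)
    n = len(scan_line)
    c = np.full(n, np.nan)
    for i in range(half_window, n - half_window):
        neighbours = np.vstack((scan_line[i - half_window:i],
                                scan_line[i + 1:i + 1 + half_window]))
        # Sum of the difference vectors from point i to each neighbour.
        diff_sum = (scan_line[i] - neighbours).sum(axis=0)
        c[i] = np.linalg.norm(diff_sum) / (
            len(neighbours) * np.linalg.norm(scan_line[i]))
    return c

def select_features(c, n_edge=2, n_planar=3):
    """Indices of the n_edge largest-c and n_planar smallest-c points."""
    valid = np.flatnonzero(~np.isnan(c))
    order = valid[np.argsort(c[valid])]
    return order[-n_edge:], order[:n_planar]

# Synthetic scan line (hypothetical data): two walls meeting at a corner,
# seen from a sensor at the origin. The corner is the point at index 20.
wall_a = [(t, 2.0, 0.0) for t in np.linspace(-1.0, 1.0, 21)]
wall_b = [(1.0, 2.0 - s, 0.0) for s in np.linspace(0.1, 2.0, 20)]
scan = np.array(wall_a + wall_b)

c = smoothness(scan)
edge_idx, planar_idx = select_features(c)
print("edge candidates:", edge_idx, "planar candidates:", planar_idx)
```

On flat, evenly sampled sections the difference vectors cancel and the value stays near zero, while at the corner they add up, which is why the largest values mark edge points and the smallest mark planar points.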

Lidar odometry is achieved by minimizing the distances between the feature points of the current lidar frame and the corresponding edge lines and planar surfaces observed in the previous frame. This process estimates the pose transformation from the previous frame, providing information on the movement of the ground vehicle. The vehicle’s motion is represented as a translation in the ground frame and a yaw rotation. The distance from a point to the line is a crucial factor in this process, and it is calculated by:

The distance from a point to the planar surface is given as:
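As an illustration of the two distances used in the odometry step, the sketch below computes the point-to-line and point-to-plane distances with the standard cross-product and normal-vector formulas. It assumes that the edge line is defined by two edge points and the planar surface by three planar points, as in the published LOAM formulation; the function names and the sample points are hypothetical.

```python
import numpy as np

def point_to_line_distance(p, a, b):
    """Distance from point p to the line through edge points a and b."""
    p, a, b = (np.asarray(v, dtype=float) for v in (p, a, b))
    # |(p - a) x (p - b)| is twice the area of triangle (p, a, b);
    # dividing by the base length |a - b| gives the triangle height.
    return np.linalg.norm(np.cross(p - a, p - b)) / np.linalg.norm(a - b)

def point_to_plane_distance(p, a, b, c):
    """Distance from point p to the plane through planar points a, b, c."""
    p, a, b, c = (np.asarray(v, dtype=float) for v in (p, a, b, c))
    # The plane normal is the cross product of two in-plane vectors.
    normal = np.cross(a - b, a - c)
    # Project (p - a) onto the unit normal.
    return abs(np.dot(p - a, normal)) / np.linalg.norm(normal)

# A point 1 m above the x axis.
d_line = point_to_line_distance((0.0, 0.0, 1.0),
                                (-1.0, 0.0, 0.0), (1.0, 0.0, 0.0))

# A point 2 m above the plane z = 0.
d_plane = point_to_plane_distance((0.3, 0.4, 2.0),
                                  (0.0, 0.0, 0.0), (1.0, 0.0, 0.0),
                                  (0.0, 1.0, 0.0))
print(d_line, d_plane)
```

In an odometry loop these distances would be stacked into a residual vector and minimized over the pose parameters; that optimization step is not shown here.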

By incorporating keyframes into the lidar odometry and mapping process, we can enhance its speed and efficiency. A keyframe is selected when the translation or rotation since the previous keyframe exceeds a predetermined threshold. Using keyframes for map updates allows us to maintain localization accuracy while improving computational efficiency. To further enhance computational efficiency, distortion compensation can be applied during both the odometry and mapping stages. This step helps reduce the computational cost associated with the process. The 3D maps constructed by this algorithm can be annotated to build the HD map. We have also verified our map using loop closure methods. The localization accuracy is approximately 2 cm, and the computational time for mapping and odometry is 72 ms per frame, which is less than the lidar data acquisition period of 100 ms per frame.
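The keyframe rule can be sketched as follows. The pose representation `(x, y, yaw)` mirrors the planar translation plus yaw rotation described earlier; the threshold values of 1 m and 10 degrees and the function names are assumptions chosen for illustration, since the thresholds used in the study are not stated.

```python
import math

def wrap_angle(angle):
    """Wrap an angle in radians to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))

def is_keyframe(last_keyframe, pose,
                trans_thresh_m=1.0, yaw_thresh_rad=math.radians(10.0)):
    """True if pose has moved or turned enough since the last keyframe.

    Poses are (x, y, yaw) tuples; the thresholds are hypothetical.
    """
    dx = pose[0] - last_keyframe[0]
    dy = pose[1] - last_keyframe[1]
    dyaw = wrap_angle(pose[2] - last_keyframe[2])
    return math.hypot(dx, dy) > trans_thresh_m or abs(dyaw) > yaw_thresh_rad

def select_keyframes(poses, **thresholds):
    """Indices of the poses kept as keyframes; the first pose is always kept."""
    keyframes = [0]
    for i in range(1, len(poses)):
        if is_keyframe(poses[keyframes[-1]], poses[i], **thresholds):
            keyframes.append(i)
    return keyframes

# Hypothetical odometry output: straight driving, then a turn.
poses = [(0.0, 0.0, 0.0), (0.4, 0.0, 0.0), (0.8, 0.0, 0.0),
         (1.2, 0.0, 0.0), (1.3, 0.0, 0.3), (1.4, 0.0, 0.31)]
keyframes = select_keyframes(poses)
print(keyframes)  # [0, 3, 4]
```

Only the frames at the returned indices would trigger a map update, so the map grows with distance travelled rather than with the number of scans.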

Table I: Comparison of localization accuracy, computational time, average rotational error, and translational error with the LOAM algorithm.

| Metric | RT-LOAM (present study) | LOAM |
| --- | --- | --- |
| Localization error (cm) | 2.11 | 2.13 |
| Computational time per frame (ms) | 34 | 73 |
| Rotational error (deg/m) | 0.0046 | 0.0045 |
| Translational error (%) | 0.70 | 0.71 |

HD map-based autonomous navigation of campus shuttle using only lidar (without GPS and IMU):

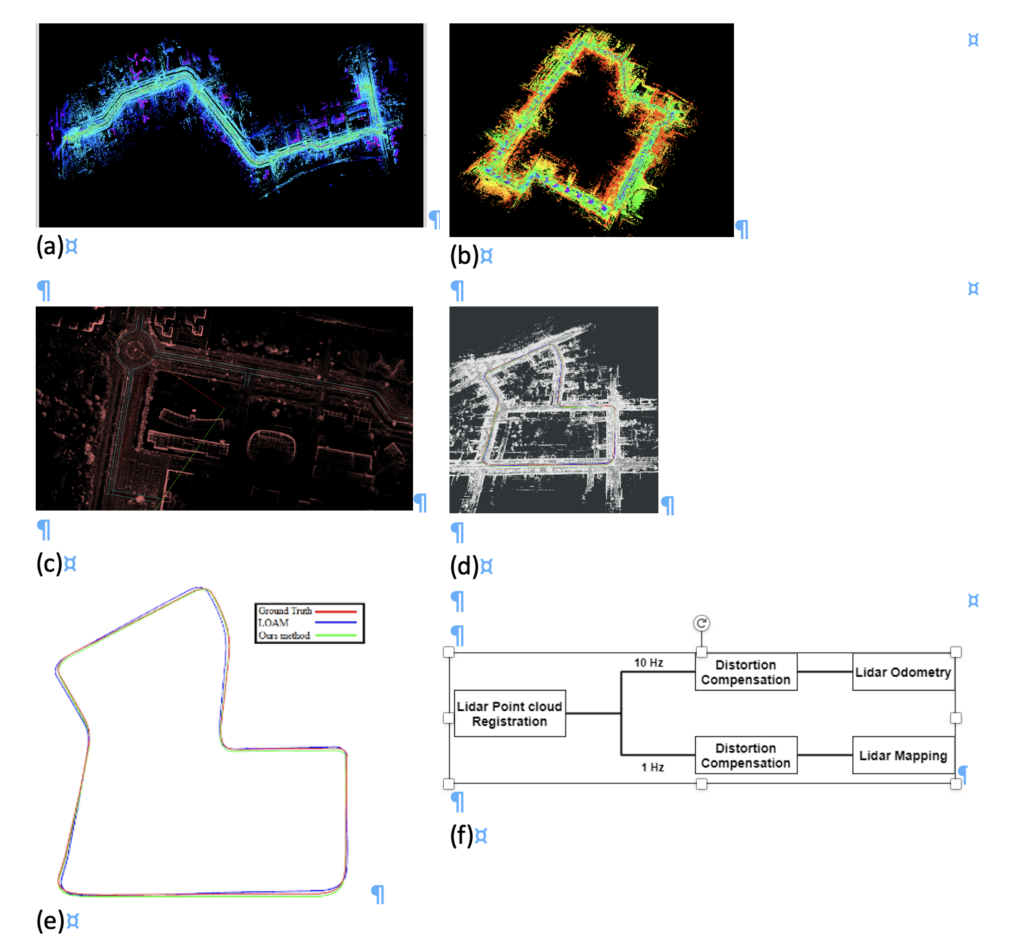

Once the HD map of an environment is created using lidar, we can load the map and use it for autonomous navigation without GPS or IMU sensors, which is helpful in GPS-denied areas (Fig. 3). On the loaded map, the ego-vehicle is localized in real time to obtain its current position and orientation. The objective of the matching process is to find the transformation that best aligns the input scan with the prebuilt 3D map. This alignment is achieved with a score function based on the normal distributions transform (NDT), which filters the points and identifies the optimal match with the prebuilt 3D map. Our main goal is to optimize the NDT evaluation metrics, such as the number of iterations, fitness score and transformation probability, so that the current lidar point cloud can be matched to the built map in real time on autonomous vehicles.
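To make the idea of an NDT score function concrete, the sketch below builds a voxel grid over a map, fits a Gaussian to the points of each voxel, and scores a scan under a candidate pose. It is a simplified illustration under stated assumptions, not the matcher used on the vehicle: it uses a planar pose `(x, y, yaw)`, evaluates only the voxel each point falls into, performs no optimization, and all names, the cell size, the regularization term and the synthetic two-wall map are hypothetical.

```python
import numpy as np

def build_ndt_cells(map_points, cell_size=1.0, min_points=5):
    """Fit a Gaussian (mean, inverse covariance) to the points in each voxel."""
    groups = {}
    keys = np.floor(map_points / cell_size).astype(int)
    for key, point in zip(map(tuple, keys), map_points):
        groups.setdefault(key, []).append(point)
    cells = {}
    for key, pts in groups.items():
        if len(pts) < min_points:
            continue  # too few points for a stable covariance
        pts = np.array(pts)
        # A small diagonal term keeps the covariance invertible.
        cov = np.cov(pts.T) + 1e-3 * np.eye(3)
        cells[key] = (pts.mean(axis=0), np.linalg.inv(cov))
    return cells

def transform(points, x, y, yaw):
    """Apply a planar pose (translation in x, y and rotation about z)."""
    c, s = np.cos(yaw), np.sin(yaw)
    rotation = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    return points @ rotation.T + np.array([x, y, 0.0])

def ndt_score(cells, scan, pose, cell_size=1.0):
    """Sum of Gaussian likelihood terms of the transformed scan points."""
    score = 0.0
    for p in transform(scan, *pose):
        cell = cells.get(tuple(np.floor(p / cell_size).astype(int)))
        if cell is None:
            continue  # point falls in an empty voxel
        mean, inv_cov = cell
        d = p - mean
        score += np.exp(-0.5 * d @ inv_cov @ d)
    return score

# Synthetic map (hypothetical): two perpendicular walls with small noise.
rng = np.random.default_rng(0)
wall_1 = np.column_stack((rng.uniform(0, 10, 2000),
                          5.5 + rng.normal(0, 0.02, 2000),
                          rng.uniform(0, 2, 2000)))
wall_2 = np.column_stack((9.5 + rng.normal(0, 0.02, 1000),
                          rng.uniform(0, 5, 1000),
                          rng.uniform(0, 2, 1000)))
map_points = np.vstack((wall_1, wall_2))
cells = build_ndt_cells(map_points)

# The scan is a subset of the map, so the true pose is the identity.
scan = map_points[rng.choice(len(map_points), 200, replace=False)]
score_true = ndt_score(cells, scan, (0.0, 0.0, 0.0))
score_shifted = ndt_score(cells, scan, (0.5, 0.5, 0.0))
print(score_true, score_shifted)
```

A full NDT matcher would iterate on the pose to maximize this score, typically with Newton-type steps; the iteration count and the final score are the kind of metrics referred to above.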

The 3D map built with lidar is used for navigation (Fig. 4). The map is loaded first, and the vehicle is localized using the NDT matching algorithm. Localization gives us the current position and orientation of the vehicle. From the orientation, we determine the heading of the vehicle with respect to the map axis. The bearing difference is calculated from the target waypoint, the current position and the heading of the vehicle. Once the steering output is calculated, the vehicle’s steering is controlled accordingly. When an obstacle is detected on the path, the vehicle brakes accordingly. Map-based navigation is helpful in GPS-denied areas or where the GPS signal is weak. It can also be used for autonomous parking in indoor environments.
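The bearing-difference step can be illustrated with a short sketch. It assumes a 2D map frame in which the heading is measured from the map x axis, and it uses a simple proportional steering law with a saturation limit; the gain, the 30 degree limit and the function names are hypothetical and do not describe the controller used on the shuttle.

```python
import math

def bearing_difference(position, heading, waypoint):
    """Signed angle (rad) from the vehicle heading to the target waypoint.

    position and waypoint are (x, y) in the map frame; heading is the yaw
    angle measured from the map x axis. The result is wrapped to [-pi, pi].
    """
    bearing = math.atan2(waypoint[1] - position[1],
                         waypoint[0] - position[0])
    diff = bearing - heading
    return math.atan2(math.sin(diff), math.cos(diff))

def steering_command(position, heading, waypoint,
                     gain=1.0, max_steer=math.radians(30.0)):
    """Proportional steering angle, clamped to +/- max_steer (assumed limit)."""
    steer = gain * bearing_difference(position, heading, waypoint)
    return max(-max_steer, min(max_steer, steer))

# Vehicle at the map origin, heading along the x axis.
small_turn = steering_command((0.0, 0.0), 0.0, (10.0, 1.0))
sharp_turn = steering_command((0.0, 0.0), 0.0, (10.0, 10.0))
print(math.degrees(small_turn), math.degrees(sharp_turn))
```

Wrapping the difference keeps the command continuous when the heading and the bearing lie on opposite sides of the 180 degree boundary, so the vehicle turns the short way round.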

Summary & conclusions

In the present study, map-based navigation has been tested and validated in a controlled environment. Localization of autonomous vehicles in map-based navigation is a critical process that involves determining the vehicle’s position and orientation (pose) within a predefined map or environment. It enables the vehicle to navigate its surroundings and follow the intended path accurately. Lidar odometry and mapping (LOAM) is an essential component of autonomous vehicle systems, enabling precise localization and the creation of up-to-date maps of the environment. By leveraging the rich data provided by lidar sensors, AVs can navigate with high accuracy and respond effectively to dynamic surroundings, making them capable of operating safely and efficiently in various conditions. However, it’s worth noting that LOAM is often combined with data from other sensors (e.g., cameras, GPS, IMUs) to achieve a comprehensive and robust navigation system for autonomous vehicles. Most modern autonomous vehicles combine these techniques in what is known as sensor fusion, where data from various sensors is integrated to achieve robust and accurate localization. By combining the strengths of different methods, autonomous vehicles can navigate safely and precisely in complex environments, even when facing uncertainties and sensor limitations.

This paper was first presented by Dr. Rajalakshmi Pachamuthu at the June 2023 edition of ADAS & Autonomous Vehicle Technology Expo Europe, in Stuttgart, as part of the Vision, sensing, mapping and positioning session, on Wed 14th June 2023.