With autonomous vehicles becoming increasingly common on the roads, making them as safe as possible may mean going beyond the specs of the vehicles themselves – and upgrading the roadway infrastructure.

Eyedar, a low-power millimeter-wave radar sensor roughly the size of an orange, could provide radar-equipped AVs with inputs about surrounding traffic, extending and enhancing the vehicles’ sensing accuracy.

Placed at key points such as streetlights and intersections, the sensors could reduce the chances of AVs failing to pick up on emergent obstacles, even when such hazards are not within range of the vehicles’ onboard sensors or when visibility is limited.

Kun Woo Cho, a postdoctoral researcher at Rice University who leads the Eyedar research project, introduced the technology at HotMobile, the International Workshop on Mobile Computing Systems and Applications, which took place in Atlanta, Georgia, last week.

“Current automotive sensor systems like cameras and lidar struggle with poor visibility such as you would encounter due to rain or fog or in low-lighting conditions,” said Cho, who works in the lab of Ashutosh Sabharwal, Rice’s Ernest Dell Butcher Professor of Engineering and professor of electrical and computer engineering. “Radar, on the other hand, operates reliably in all weather and lighting conditions and can even see through obstacles.”

Radar systems transmit signals in a given direction, and when a signal encounters an obstacle in its path, part of it reflects back to the source, carrying information about the obstacle. However, only a small fraction of the radar signal emitted is reflected back, and most of it bounces away from the source device.

In the context of self-driving vehicles, this means that a large fraction of the emitted radar signal scatters away from the vehicle, leaving an incomplete view of its surroundings. Pedestrians emerging from behind large vehicles, cars creeping forward at intersections or cyclists approaching at odd angles can easily go unnoticed.

Thanks to its placement on roadside infrastructure such as traffic lights, stop signs or streetlights, Eyedar can capture radar reflections that would otherwise be lost. The device’s unique structure enables it to determine the direction of reflected signals and report that information back to self-driving vehicles.

“It is like adding another set of eyes for automotive radar systems,” said Cho, who specializes in metamaterial antenna design.

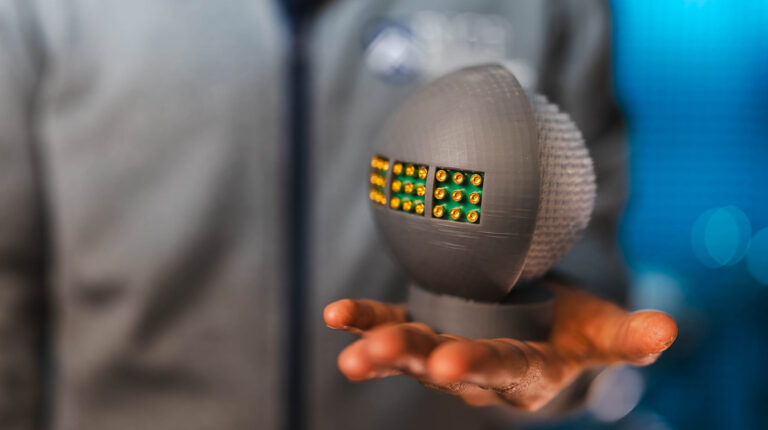

The design of Eyedar is inspired by the human eye. The device consists of two main components: a 3D-printed Luneburg lens made from resin, focusing incoming signals from any direction onto a focal point on the opposite surface; and an antenna array surrounding the lens on the back end, which functions like a retina, detecting the signal and determining its direction.
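To make the lens idea concrete: a classical, ideal Luneburg lens has a refractive index that falls smoothly from the square root of 2 at the center to 1 at the surface, which is what lets it bring a plane wave from any direction to a focus on the far side. The article does not say which profile Eyedar's printed lens approximates, so the Python sketch below assumes the textbook formula, and the radius value is purely hypothetical.

```python
import math


def luneburg_index(r, radius):
    """Refractive index of an ideal Luneburg lens at distance r from its center.

    Textbook profile: n(r) = sqrt(2 - (r / radius)^2).
    This is an assumption for illustration, not Eyedar's published design.
    """
    if not 0 <= r <= radius:
        raise ValueError("r must lie between 0 and the lens radius")
    return math.sqrt(2.0 - (r / radius) ** 2)


# Hypothetical lens radius, chosen only to make the example readable.
radius_mm = 30.0
for r in (0.0, 15.0, 30.0):
    print(f"r = {r:4.1f} mm -> n = {luneburg_index(r, radius_mm):.3f}")
# r =  0.0 mm -> n = 1.414
# r = 15.0 mm -> n = 1.323
# r = 30.0 mm -> n = 1.000
```

A printed lens can only approximate this continuous gradient, typically by varying how much material fills each small region of the structure.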

Whereas conventional radar systems rely on large antenna arrays and complex algorithms to estimate angles, Eyedar’s physical design does most of the computational work typically required for direction finding, one of the most power- and data-intensive tasks in radar processing.

“Our lens consists of over 8,000 uniquely shaped, extremely small elements with a varying refractive index,” Cho said.

Through an intentional distribution of these elements, the lens structure interacts with incoming radar signals, routing them to the right spot on the antenna array. The approach has proved fruitful: in testing, Eyedar was able to resolve target directions more than 200 times faster than traditional radar designs.
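A rough way to picture why this is fast: once the lens has focused a signal onto one spot, finding the direction can reduce to checking which antenna element received the most power. The Python sketch below illustrates that idea under stated assumptions. It is not the team's actual processing chain, and the element positions, the power readings and the function name `estimate_arrival_angle` are all hypothetical.

```python
def estimate_arrival_angle(powers, element_angles_deg):
    """Return the arrival direction implied by the strongest antenna element.

    Assumes an ideal lens that focuses each incoming wave onto the point
    diametrically opposite its arrival direction, so the source lies 180
    degrees from the element that lights up.
    """
    if not powers or len(powers) != len(element_angles_deg):
        raise ValueError("need one power reading per antenna element")
    strongest = max(range(len(powers)), key=powers.__getitem__)
    return (element_angles_deg[strongest] + 180.0) % 360.0


# Hypothetical array: five elements on the back of the lens, 15 degrees apart.
element_angles_deg = [150.0, 165.0, 180.0, 195.0, 210.0]
powers = [0.02, 0.10, 0.85, 0.12, 0.03]  # made-up readings

print(estimate_arrival_angle(powers, element_angles_deg))  # 0.0
```

The lookup is a single pass over a handful of readings, in contrast to the array-wide angle estimation that conventional radars perform in software.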

Moreover, Eyedar communicates what it sees without transmitting new signals. Instead, the sensor alternates between absorbing incoming radar waves and reflecting them back to the source radar, which can interpret the resulting pattern as a sequence of zeros and ones.
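The absorb-or-reflect scheme can be pictured as simple on-off signaling. The Python sketch below is a toy model only: it assumes that reflecting stands for a one and absorbing for a zero, that the radar sees two clean amplitude levels, and that there is no noise. None of those details come from the Eyedar team, and all names and values are hypothetical.

```python
def tag_states(bits):
    """Map each bit to a sensor state (assumed: 1 = reflect, 0 = absorb)."""
    return ["reflect" if bit else "absorb" for bit in bits]


def received_amplitudes(states, reflect_level=1.0, absorb_level=0.1):
    """Echo strength the radar would see for each state (made-up levels)."""
    return [reflect_level if s == "reflect" else absorb_level for s in states]


def decode(amplitudes, threshold=0.5):
    """Recover bits on the radar side by thresholding the echo strength."""
    return [1 if a > threshold else 0 for a in amplitudes]


message = [1, 0, 1, 1, 0]
echoes = received_amplitudes(tag_states(message))
print(decode(echoes))  # [1, 0, 1, 1, 0]
```

Because the sensor only modulates energy that the vehicle's radar has already emitted, it does not need a transmitter of its own, which is consistent with the low-power design described here.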

“Eyedar is a talking sensor,” Cho said. “It is a first instance of integrating radar sensing and communication functionality in a single design.”

This combination of sensing and communication in a compact, inexpensive, low-power architecture makes it feasible to deploy large numbers of sensors across roadways. In the case of self-driving cars, the system could be particularly suited to dense, high-traffic urban settings. However, the potential application space is much wider: Eyedar could be integrated into robots, drones and wearable platforms. Networks of these sensors could also share information with one another, allowing each device to see well beyond its own range of sight.
