Andrej Karpathy, senior director of AI at Tesla, recently outlined details of the in-house supercomputer the automaker is using to train deep neural networks for Autopilot and self-driving capabilities. The cluster comprises 720 nodes, each with eight Nvidia A100 Tensor Core GPUs (5,760 GPUs in total), and delivers a claimed industry-leading 1.8 exaflops of performance. “This is a really incredible supercomputer,” Karpathy said. “I actually believe that in terms of flops, this is roughly the No. 5 supercomputer in the world.”
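
The headline numbers can be sanity-checked with simple arithmetic. The sketch below assumes the 1.8 exaflops figure refers to peak dense FP16/BF16 Tensor Core throughput, which Nvidia lists as 312 teraflops per A100; the talk as reported here does not state the precision, so treat that per-GPU value as an assumption.

```python
# Back-of-the-envelope check of the cluster figures quoted in the article.
NODES = 720          # from the article
GPUS_PER_NODE = 8    # from the article

# Assumption: Nvidia's published peak for dense FP16/BF16 Tensor Core math
# on a single A100. The article does not say which precision was meant.
A100_PEAK_TFLOPS = 312

total_gpus = NODES * GPUS_PER_NODE
# 1 exaflop = 1,000,000 teraflops
peak_exaflops = total_gpus * A100_PEAK_TFLOPS / 1_000_000

print(f"{total_gpus} GPUs, about {peak_exaflops:.2f} exaflops peak")
```

Under that assumption the GPU count and the quoted 1.8 exaflops are consistent with each other. This is a theoretical peak, not a measured benchmark result.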

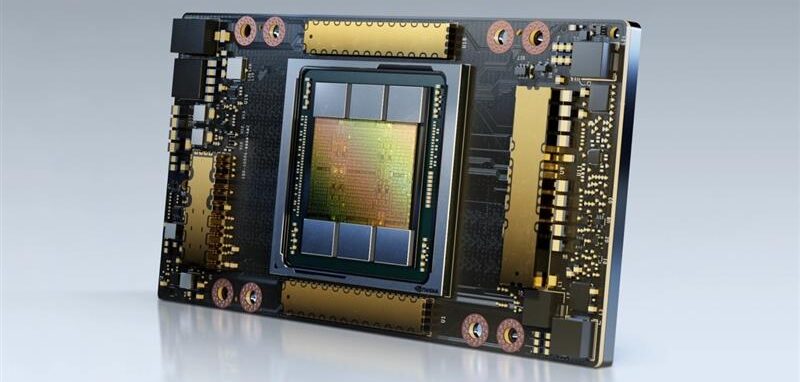

Powered by the Nvidia Ampere architecture, the A100 GPU delivers up to 20x higher performance than the prior generation, according to Nvidia, and can be partitioned into seven GPU instances to adjust dynamically to shifting demands.

The GPU cluster is part of Tesla’s vertically integrated autonomous driving approach, which uses customer car data to inform and improve new features. Tesla’s cyclical development begins in the car, where a deep neural network (DNN) running in ‘shadow mode’ perceives and makes predictions while the car is driving, without actually controlling the vehicle. These predictions are recorded and any mistakes or misidentifications are logged. Tesla engineers then use these instances to create a training dataset of difficult and diverse scenarios to refine the DNN.
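
The shadow-mode step described above amounts to comparing what the network predicted with what actually happened and keeping the disagreements. The following is a minimal illustrative sketch of that filtering idea, not Tesla's implementation; the `Observation` record, its fields, the labels, and `collect_hard_cases` are all hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One recorded moment from a drive (hypothetical structure)."""
    clip_id: str
    predicted: str  # what the shadow-mode network predicted
    actual: str     # what was later observed to be true


def collect_hard_cases(observations):
    """Keep only the observations where the prediction was wrong."""
    return [o for o in observations if o.predicted != o.actual]


# Toy log with made-up labels; the network never controls the car here,
# it only has its predictions recorded and checked afterwards.
log = [
    Observation("clip-001", "lane_keep", "lane_keep"),
    Observation("clip-002", "no_obstacle", "obstacle"),
    Observation("clip-003", "stop_sign", "stop_sign"),
]

hard_cases = collect_hard_cases(log)
print([o.clip_id for o in hard_cases])  # → ['clip-002']
```

In this toy version only the mispredicted clip survives the filter, which mirrors how logged mistakes become candidates for the training dataset.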

The result is a collection of roughly 1 million 10-second clips recorded at 36 fps, totaling 1.5 PB of data. The DNN is then run through these scenarios in the data center over and over until it operates without a mistake. Finally, it is sent back to the vehicle, and the process begins again.
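
To give a sense of scale, the dataset figures can be broken down per clip. This rough sketch assumes decimal units (1 PB = 10^15 bytes) and counts frames as if each clip were a single video stream; if a clip bundles several camera streams, the frame count scales accordingly.

```python
# Rough scale of the training set, using the figures quoted in the article.
CLIPS = 1_000_000     # "roughly 1 million" clips
CLIP_SECONDS = 10
FPS = 36
TOTAL_BYTES = 1.5e15  # 1.5 PB, assuming decimal units (1 PB = 1e15 bytes)

frames_per_clip = CLIP_SECONDS * FPS          # frames in one stream of one clip
total_frames = CLIPS * frames_per_clip        # frames across the dataset
footage_hours = CLIPS * CLIP_SECONDS / 3600   # total recorded time
gb_per_clip = TOTAL_BYTES / CLIPS / 1e9       # average size of one clip

print(f"{frames_per_clip} frames per clip, {total_frames:,} frames in total")
print(f"about {footage_hours:,.0f} hours of footage, {gb_per_clip:.1f} GB per clip")
```

Under these assumptions, each 10-second clip averages about 1.5 GB, and the full set covers roughly 2,800 hours of driving.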

Karpathy said that training a DNN in this manner and on such a large amount of data requires “a huge amount of compute,” which led Tesla to build and deploy the current-generation supercomputer with high-performance A100 GPUs.

He added that the DNN structure the automaker currently deploys allows a team of 20 engineers to work on a single network at once, isolating different features for parallel development. These DNNs can then be run through training datasets faster than was previously possible, enabling rapid iteration. “Computer vision is the bread and butter of what we do and enables Autopilot. For that to work, you need to train a massive neural network and experiment a lot,” Karpathy concluded. “That’s why we’ve invested a lot into the compute.”